Can AI Spot Lies in Witness Testimony?

AI flags inconsistencies in witness testimony but struggles with truth detection, bias, transparency, and legal admissibility.

AI shows promise in analyzing witness testimony, but it’s not ready to replace human judgment. Here’s what you need to know:

- AI's Strengths: It categorizes witness confidence levels, processes large datasets, and analyzes speech, facial expressions, and behavior. For instance, a 2024 study showed AI classified confidence statements with 71% accuracy, and high-confidence identifications were 90% accurate.

- Challenges: AI struggles with detecting truthful statements (19.5% accuracy in one study), suffers from "lie bias", and lacks transparency. Legal admissibility is also a hurdle, as courts question AI's reliability and fairness.

- Ethical Concerns: Current systems rely on contested theories like micro-expressions, and limited training data can lead to biased outcomes.

- Comparison to Polygraphs: While polygraphs are less scalable and more intrusive, their reported accuracy (~87%) matches or exceeds that of most AI tools outside lab settings. AI, however, analyzes data faster and at lower costs.

AI could assist legal professionals and court coverage by flagging inconsistencies, but it’s not ready for courtroom decisions. Improvements in transparency, accuracy, and ethical practices are needed before widespread use.


How AI Analyzes Witness Testimony

AI examines both the content and delivery of witness testimony by analyzing speech patterns, facial expressions, and body language. Modern systems use a combination of data streams - known as multimodal analysis - to evaluate credibility. This blend of verbal and non-verbal signals sets AI apart from older methods, providing a more nuanced approach to criminal investigations. Let’s break down how these different modalities contribute to detecting deception.

Speech and Voice Analysis

AI leverages Natural Language Processing (NLP) to uncover verbal fillers, hesitations, and inconsistencies, while Voice Stress Analysis evaluates pitch, tone, and pauses. For example, in December 2025, researchers Twisha Patel and Daxa Vekariya from Parul University introduced a lightweight Convolutional Neural Network (CNN) model that combined NLP with facial analysis. Tested on the "Real-Life Deception Detection" dataset, their model achieved an impressive 96% accuracy rate while requiring fewer computing resources than traditional systems. This study highlights how integrating linguistic analysis with visual cues can improve deception detection. But speech is just one piece of the puzzle - facial expressions provide another layer of insight.
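The verbal-cue side of this analysis can be illustrated with a very small sketch. It is not the Parul University model; it only counts fillers and marked pauses in a transcript. The filler list, the pause markers (`...` and `--`), and the function name `verbal_cue_counts` are assumptions made for illustration.

```python
import re

# Hypothetical filler list for illustration; real systems learn features from data.
FILLERS = {"um", "uh", "er", "hmm"}


def verbal_cue_counts(transcript: str) -> dict:
    """Count simple verbal cues (fillers, marked pauses) in a transcript."""
    tokens = re.findall(r"[a-z']+", transcript.lower())
    filler_count = sum(1 for token in tokens if token in FILLERS)
    # Assumed transcription convention: "..." or "--" marks an audible pause.
    pause_count = len(re.findall(r"\.\.\.|--", transcript))
    total = len(tokens)
    return {
        "tokens": total,
        "fillers": filler_count,
        "pauses": pause_count,
        "filler_rate": filler_count / total if total else 0.0,
    }


sample = "I was, um, at home... I think. Uh, I left around nine."
counts = verbal_cue_counts(sample)
print(counts)
```

Counts like these are only raw features. On their own they say nothing about whether a statement is true, which is why the systems described here combine them with other signals.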

Facial Expression and Microexpression Analysis

Facial expressions are central to AI's ability to assess credibility. Algorithms trained on videos of deceptive behavior can detect subtle micro-expressions and emotional leaks that often go unnoticed by humans. A notable example occurred in October 2025 when the AI tool TruthOrLie.ai analyzed a 2007 "60 Minutes" interview featuring former New York Yankees player Alex Rodriguez. The system flagged his denial of using performance-enhancing drugs as deceptive. Its developers claim an internal testing accuracy of over 70%, compared with an average human accuracy of 54%. Some legal AI tools now even provide real-time feedback during depositions, offering credibility metrics like a "four-bar lie rating" to help attorneys gauge witness reliability.

Behavioral Pattern Recognition

AI also monitors behavioral signals such as eye movements, pupil dilation, blink rates, body stiffness, and Galvanic Skin Response (GSR) to detect stress and cognitive strain. In 2025, researchers at Symbiosis International Deemed University developed the CogniModal-D dataset, which focuses on the Indian population and includes over 100 participants across three age groups. By combining data from seven modalities - like EEG, GSR, and video - they achieved a 15% improvement in detecting deceptive behavior during mock crime interrogations compared to single-modality approaches. This research underscores the importance of using multiple behavioral cues, as no single signal is sufficient to determine deception on its own.
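One common way to combine several modalities is late fusion: each modality produces its own score, and the scores are merged afterward. The sketch below uses a weighted average. The text does not describe the fusion method behind the CogniModal-D results, so the function `fuse_scores`, the weights, and the example scores are all hypothetical.

```python
def fuse_scores(scores: dict[str, float], weights: dict[str, float]) -> float:
    """Weighted average of per-modality scores in [0, 1].

    Modalities without a configured weight are skipped, so the function
    still works when a sensor (for example EEG) is unavailable.
    """
    used = [modality for modality in scores if modality in weights]
    total_weight = sum(weights[modality] for modality in used)
    if total_weight == 0:
        raise ValueError("no overlapping modalities with non-zero weight")
    weighted_sum = sum(scores[modality] * weights[modality] for modality in used)
    return weighted_sum / total_weight


# Hypothetical weights and per-modality scores, for illustration only.
weights = {"eeg": 2.0, "gsr": 1.0, "video": 1.0}
scores = {"eeg": 0.5, "gsr": 0.75, "video": 0.25}

fused = fuse_scores(scores, weights)
print(f"fused score: {fused:.2f}")
```

The design point is that a single noisy signal, such as blink rate, cannot dominate the result unless its weight says it should.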

Benefits of AI in Lie Detection

AI brings a range of advantages to analyzing witness testimony that traditional methods simply can't provide. One of the biggest strengths is objectivity - AI systems assess statements without the contextual biases that often influence human investigators. As Paul Heaton, Academic Director of the Quattrone Center at the University of Pennsylvania Carey Law School, puts it:

The AI developed by the researchers offers a neutral method for assessing such statements free of contextual bias, leveraging information from thousands of prior eyewitness decisions.

Another key benefit is consistency. Unlike humans, who may interpret situations differently depending on various factors, AI applies the same criteria every time. Research from Penn Carey Law School highlights how this standardized approach enhances the reliability of evaluating eyewitness confidence statements, replacing subjective judgment with a more uniform framework.

AI also shines in its ability to process large datasets quickly, improving how credibility assessments are handled. For example, in July 2024, Alicia von Schenk and her team at the University of Würzburg used Google's BERT language model to train an AI on 1,536 statements from 768 participants. The system achieved a 67% accuracy rate in detecting lies - far better than the human average of 50%. Von Schenk noted the scalability of AI as a game-changer:

Imperfect AI tools stand to have an even greater impact because they are so easy to scale. You can only polygraph so many people in a day. The scope for AI lie detection is almost limitless by comparison.

This scalability means AI can handle tasks like screening large volumes of social media posts, job applications, or witness statements - work that would be overwhelming for manual review. By automating these initial screenings, AI allows human investigators to focus their attention on the most critical or suspicious cases flagged by the system.

Limitations and Challenges of AI in Courtrooms

AI's use in courtrooms faces several challenges, including accuracy issues, ethical concerns, and legal barriers that complicate its adoption.

Accuracy and Reliability Issues

AI's ability to detect lies is far from perfect. In one study, it identified falsehoods with 85.8% accuracy but recognized truthful statements only 19.5% of the time. This gap reflects "lie bias": AI systems are more inclined than human evaluators to assume deception. The result? A troubling number of false accusations.
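To see what those two figures imply together, here is a short worked calculation. It assumes both percentages come from the same evaluation, as the text presents them. The share of lies among all statements (`base_rate_lies`) is not given in the source, so the 50% value below is purely illustrative.

```python
lie_recall = 0.858    # share of lies correctly flagged (figure from the text)
truth_recall = 0.195  # share of truthful statements correctly recognized

# Truthful statements that get wrongly labeled as lies.
false_accusation_rate = 1 - truth_recall

# Average of the two per-class rates; 0.5 would be coin-flip performance.
balanced_accuracy = (lie_recall + truth_recall) / 2


def flagged_truthful_share(base_rate_lies: float) -> float:
    """Among statements flagged as lies, the fraction that were truthful."""
    flagged_lies = base_rate_lies * lie_recall
    flagged_truths = (1 - base_rate_lies) * false_accusation_rate
    return flagged_truths / (flagged_lies + flagged_truths)


print(f"false accusation rate: {false_accusation_rate:.1%}")
print(f"balanced accuracy:     {balanced_accuracy:.2%}")
# Illustrative assumption: half of all statements are lies.
print(f"truthful share of flags at 50% lies: {flagged_truthful_share(0.5):.1%}")
```

Under these assumptions, balanced accuracy sits only slightly above chance, because the high hit rate on lies is paid for by mislabeling most truthful statements.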

Large language models also falter in legal research. They "hallucinate" answers - essentially fabricating information - in 69% to 88% of legal queries. Even specialized legal AI tools are not immune. Lexis+ AI, despite marketing itself as "100% hallucination-free", produced errors in 17% of queries, while Westlaw AI-Assisted Research had an even higher error rate of 33%. Professor Maura Grossman of the University of Waterloo highlights the problem:

We assume that because these large language models are so fluent, it also means that they're accurate. We all sort of slip into that trusting mode because it sounds authoritative.

Another issue is the lack of diverse training data. For example, the "Silent Talker" system was trained on a mere 32 individuals, excluding Black and Hispanic participants entirely. This limited dataset led to inconsistent results across different ethnicities, age groups, and even when subjects wore eyeglasses.

But technical flaws are just one side of the coin. Legal and ethical challenges further complicate AI's role in the courtroom.

Legal Admissibility and Ethical Questions

Courts are increasingly skeptical of AI-generated evidence. Under Federal Rule of Evidence 801(b), a "declarant" must be a person, raising a fundamental question: can AI outputs even qualify as admissible statements? As family law attorney Jonathan D. Steele puts it:

You can't put Alexa or ChatGPT on the stand and ask, 'Were you lying or hallucinating when you said this?'

The risks of relying on AI were underscored in December 2025, when the law firm Goldberg Segalla and attorney Larry Mason were fined $59,500 by Cook County Judge Thomas Cushing. The penalty came after attorney Danielle Malaty used ChatGPT to research a lead paint poisoning case. The AI fabricated a case - "Mack v. Anderson" - along with 14 other false quotes.

The "black box" problem adds another layer of complexity. AI developers often cite trade secrets to avoid revealing how their algorithms work. This lack of transparency makes it impossible for opposing counsel to challenge the logic behind AI-generated evidence, such as a credibility score. This secrecy clashes with the adversarial nature of legal proceedings, where evidence must be open to scrutiny. To address this, some jurisdictions now require pretrial hearings to assess the reliability of AI-generated evidence. For instance, a New York court began implementing such hearings in 2025/2026.

Ethical concerns also loom large. There is no scientific consensus that specific facial expressions or behaviors reliably indicate deception. Yet many AI systems rely on theories like Paul Ekman's micro-expressions, which are highly contested. Janet Rothwell, an audiologist and former AI researcher, cautions:

I don't think that any system could ever be 100%, and if [the system] gets it wrong, the risk to relationships and life could be catastrophic.

AI vs. Traditional Lie Detection Methods

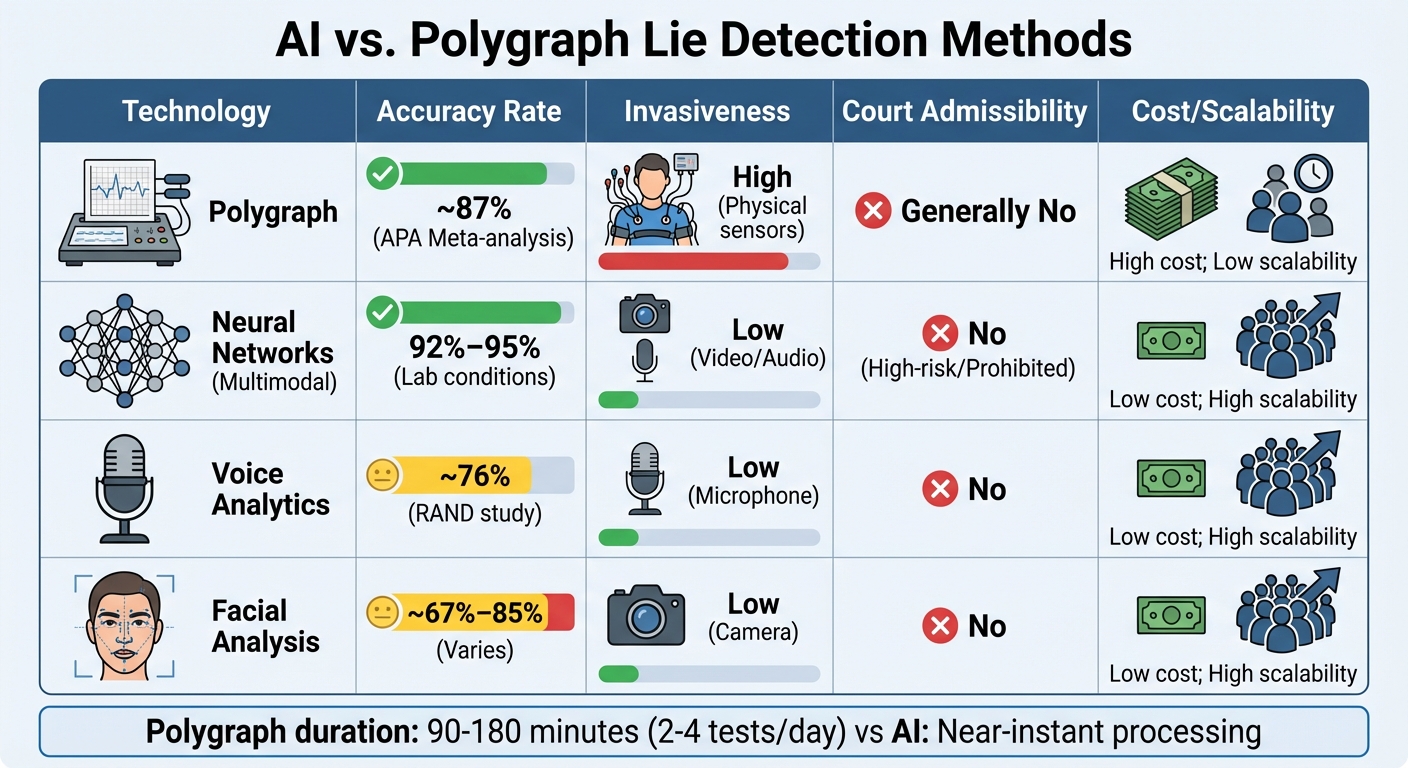

AI vs Polygraph Lie Detection: Accuracy, Cost and Scalability Comparison

This section takes a closer look at how traditional polygraph techniques stack up against newer AI-based methods in detecting deception. Polygraphs rely on measuring physiological responses like heart rate, breathing, and skin conductance, while AI focuses on external cues such as facial micro-expressions, voice tones, and linguistic patterns to identify lies.

Polygraph tests depend on human examiners to interpret physiological data after building rapport with the subject. AI, on the other hand, leverages machine learning to analyze subtle patterns across multiple data streams. Meta-analyses put polygraph accuracy at about 87%. AI accuracy, however, varies significantly. Some tools report around 67% accuracy, while controlled lab setups using advanced multimodal deep learning models claim up to 95% accuracy. That said, AI's performance tends to drop in real-world scenarios.

Polygraph exams are time-intensive, often lasting between 90 minutes and 3 hours, which limits the number of tests an examiner can conduct - usually just 2 to 4 per day. In contrast, AI systems analyze large volumes of data almost instantly. For instance, the Silent Talker software charges about $10 per minute of video analyzed, making AI a far more scalable and cost-efficient option compared to traditional polygraphs.
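The throughput figures above lend themselves to a quick back-of-the-envelope calculation. The sketch below takes the 2 to 4 exams per day and the $10 per video minute from the text. The number of interviews and the length of each recording are hypothetical inputs, and the source gives no polygraph price, so no cost comparison is attempted.

```python
import math

POLYGRAPHS_PER_DAY = (2, 4)     # range stated in the text
PRICE_PER_VIDEO_MINUTE = 10.0   # USD, Silent Talker figure cited in the text


def examiner_days(interviews: int) -> tuple[int, int]:
    """Return (best case, worst case) examiner-days for a batch of exams."""
    low_rate, high_rate = POLYGRAPHS_PER_DAY
    return math.ceil(interviews / high_rate), math.ceil(interviews / low_rate)


def video_analysis_cost(interviews: int, minutes_each: float) -> float:
    """Total analysis cost in USD at the per-minute price above."""
    return interviews * minutes_each * PRICE_PER_VIDEO_MINUTE


# Hypothetical workload: 100 interviews, 15 minutes of video each.
best, worst = examiner_days(100)
cost = video_analysis_cost(100, 15)
print(f"polygraph: {best} to {worst} examiner-days")
print(f"video analysis: ${cost:,.0f}")
```

Because the per-minute price scales with recording length, total cost depends heavily on how much footage is analyzed; the scalability advantage lies mainly in not being limited by examiner time.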

Both methods encounter significant legal hurdles. Polygraph results are seldom admissible in U.S. courts unless all parties agree, and AI-based systems face similar skepticism. Under the EU AI Act, emotion recognition technologies are classified as "high-risk", restricting their application in certain contexts. In February 2026, Judge Jed Rakoff of the Southern District of New York remarked:

Claude is not an attorney, and no amount of sophisticated output changes that.

The table below highlights the key differences between AI-based methods and traditional polygraph testing.

Comparison Table: AI vs. Polygraphs

| Technology | Accuracy Rate | Invasiveness | Court Admissibility | Cost/Scalability |
|---|---|---|---|---|
| Polygraph | ~87% (APA Meta-analysis) | High (Physical sensors) | Generally No | High cost; Low scalability |
| Neural Networks (Multimodal) | 92%–95% (Lab) | Low (Video/Audio) | No (High-risk/Prohibited) | Low cost; High scalability |
| Voice Analytics | ~76% (RAND study) | Low (Microphone) | No | Low cost; High scalability |
| Facial Analysis | ~67%–85% (Varies) | Low (Camera) | No | Low cost; High scalability |

The Future of AI in Witness Testimony Analysis

AI-based lie detection technology still faces significant obstacles. While some lab-based multimodal systems boast accuracy rates exceeding 90%, their performance in real-world scenarios often falls short. For instance, in high-stakes cases reviewed by the Deepfake Rapid Response Force, 36% of detection attempts failed due to poor media quality or insufficient training data. These challenges highlight the gap between controlled testing environments and practical courtroom applications.

One of the most critical issues is "lie bias." This refers to a tendency within AI systems to over-detect deception, which can undermine their reliability. David Markowitz, an Associate Professor at Michigan State University, cautions:

It's easy to see why people might want to use AI to spot lies - it seems like a high-tech, potentially fair, and possibly unbiased solution. But our research shows that we're not there yet.

Another pressing challenge is the lack of explainability and transparency in current AI models. Many systems operate as "black boxes", offering no clarity on how they reach their conclusions. This lack of transparency can undermine trust in the technology. Sam Gregory, Executive Director of WITNESS, points out:

Faulty, opaque detection systems erode trust and fuel the spread of AI-generated inaccuracies.

To address these issues, developers must adopt standardized evaluation tools like the TRIED Framework, which measures performance, transparency, accessibility, fairness, durability, and integration with existing legal systems. Additionally, training datasets must reflect the diversity of the "Global Majority" instead of focusing narrowly on specific demographic groups.

Importantly, AI should act as a support tool rather than a replacement for human judgment in evaluating witness credibility. By flagging inconsistencies or potential red flags, AI can assist legal professionals without making definitive judgments. Resolving these challenges is crucial for ensuring AI can complement courtroom practices without undermining the decision-making process of judges and juries.

FAQs

Why does AI label truthful testimony as lies so often?

AI often misclassifies truthful statements because of "lie bias": current systems lean toward assuming deception. In one study, AI flagged lies with 85.8% accuracy but recognized truthful statements only 19.5% of the time. These errors underscore how hard it is for AI to grasp the intricacies of human behavior and communication.

What would courts need to see before AI lie detection is admissible?

Courts would demand that AI lie detection evidence satisfies legal admissibility standards, such as reliability, scientific validity, and a solid foundation. This typically requires presenting validation studies and expert testimony to prove its accuracy and trustworthiness before it can be used in legal proceedings.

How can investigators use AI safely without biasing a case?

AI can assist investigators in evaluating witness testimony by analyzing language patterns and confidence levels. These tools can highlight potentially reliable statements, offering valuable insights during investigations. However, it's crucial to pair AI with traditional methods, as current technology isn't capable of reliably detecting lies.

To use AI effectively, investigators should remain mindful of its limitations. Results need to be validated against real-world data, ensuring accuracy. Additionally, following best practices is essential to minimize bias and avoid errors when assessing cases. AI is a tool - not a replacement for human judgment.